Abstract

Background

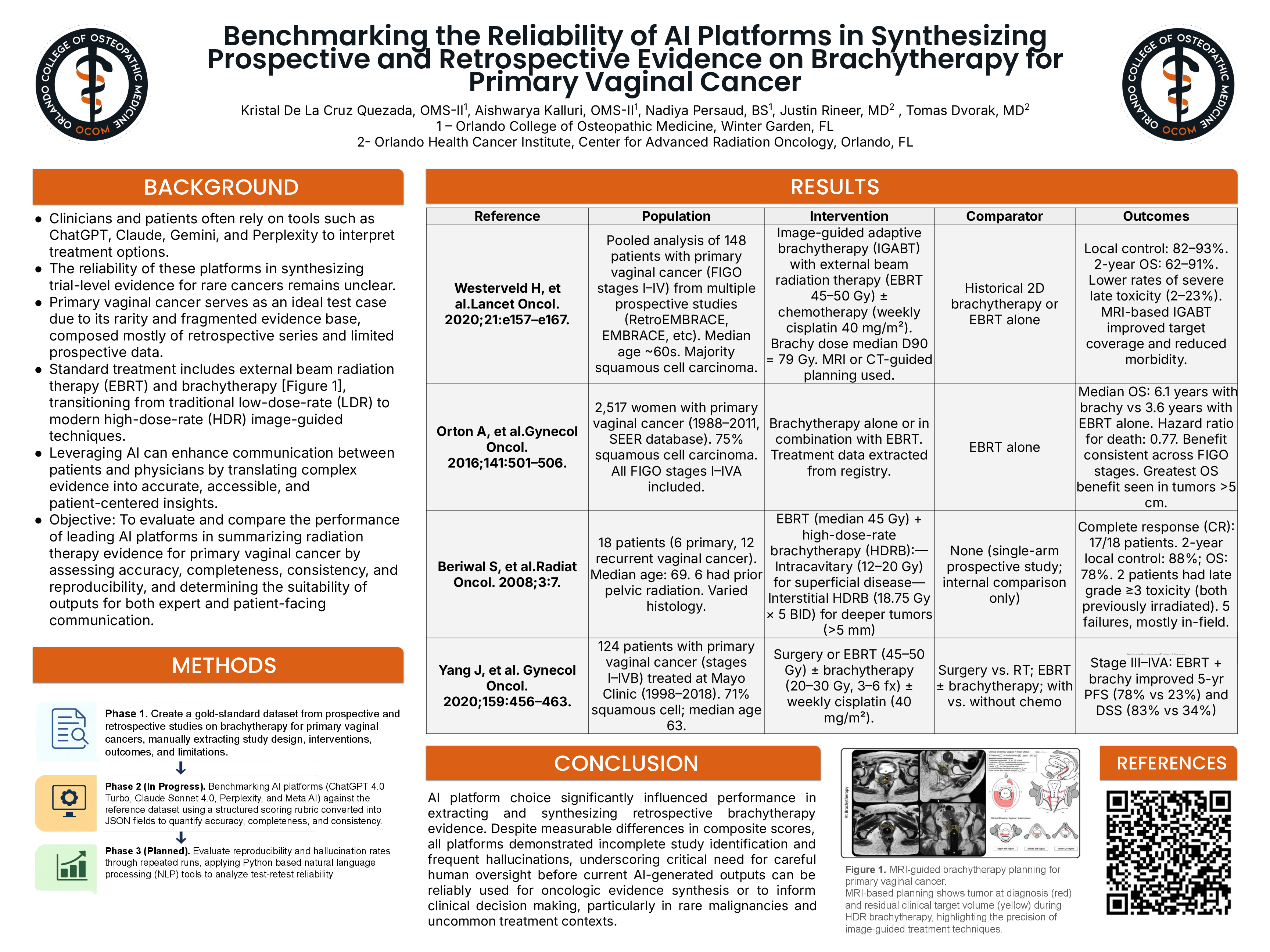

Artificial intelligence (AI) platforms such as ChatGPT, Claude, Gemini, and Perplexity are increasingly used to interpret and summarize oncologic evidence. However, their reliability in synthesizing trial-level data for rare malignancies remains uncertain. Primary vaginal cancer, for which prospective studies are scarce and the evidence base is largely retrospective, provides a useful test case for evaluating AI performance.

Methods

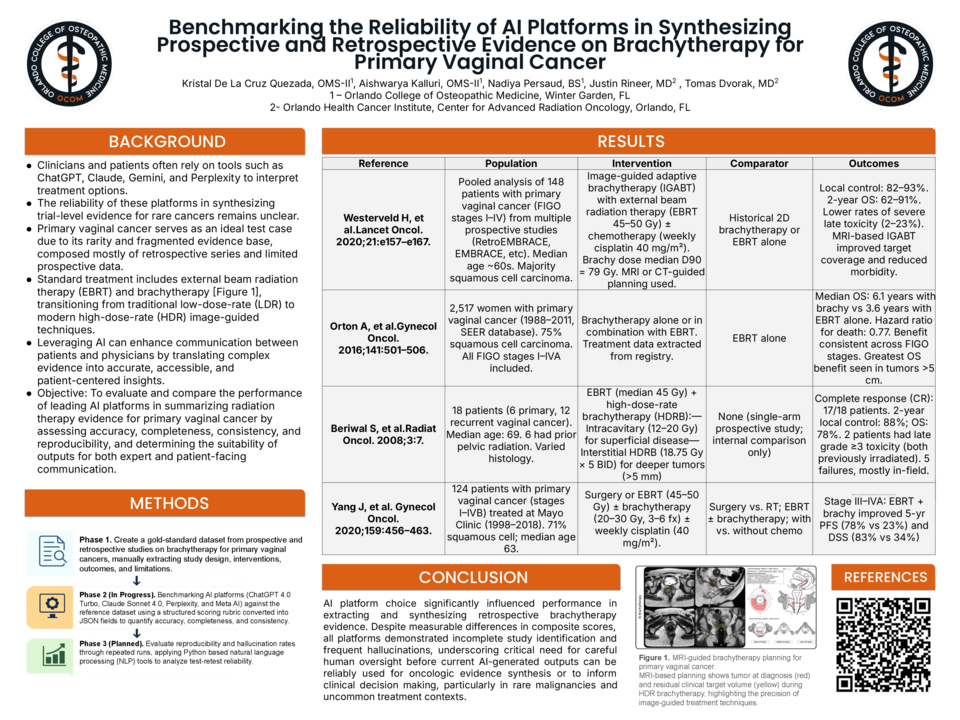

A multi-phase study was conducted. First, a gold-standard dataset was developed from prospective and retrospective studies on brachytherapy for primary vaginal cancer, with manual extraction of study design, interventions, outcomes, and limitations. Outputs from four AI platforms (ChatGPT-4 Turbo, Claude Sonnet 4.0, Perplexity, and Meta AI) were then evaluated against this dataset using a structured scoring rubric assessing accuracy, completeness, and consistency. A planned subsequent phase will quantify reproducibility and hallucination rates using repeated outputs and natural language processing–based methods.
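
As an illustration of how a rubric of this kind could be reduced to a single composite score, the following is a minimal sketch and not the study's actual scoring procedure. The 0 to 5 scale per domain, the equal weighting of the three domains, and the names `RubricScore`, `composite`, and `platform_composite` are all assumptions introduced here for the example.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class RubricScore:
    """Hypothetical rubric rating for one AI output (assumed 0-5 per domain)."""
    accuracy: int
    completeness: int
    consistency: int


def composite(score: RubricScore, max_points: int = 5) -> float:
    """Equal-weight composite, normalized to the range 0.0-1.0.

    Equal weighting is an assumption; a real rubric may weight domains differently.
    """
    parts = (score.accuracy, score.completeness, score.consistency)
    for value in parts:
        if not 0 <= value <= max_points:
            raise ValueError(f"domain score {value} is outside 0..{max_points}")
    return mean(parts) / max_points


def platform_composite(scores: list[RubricScore]) -> float:
    """Average the composite over all rated outputs from one platform."""
    if not scores:
        raise ValueError("at least one rated output is required")
    return mean(composite(s) for s in scores)
```

Normalizing each composite to a 0 to 1 range keeps platforms comparable even if the number of rated outputs per platform differs.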

Results

Performance varied across AI platforms, with measurable differences in composite scores. All platforms identified relevant studies incompletely and frequently hallucinated when synthesizing evidence. These limitations were more pronounced when summarizing heterogeneous retrospective datasets, highlighting the difficulty of accurately integrating fragmented clinical evidence.

Conclusion

AI platforms demonstrate variable and currently limited reliability in synthesizing oncologic evidence for rare cancers. While they may make complex data more accessible, significant concerns regarding accuracy and completeness persist. Human oversight remains essential before AI-generated outputs are integrated into clinical decision-making or research workflows.