Abstract

Background: Skin quality is intricately linked to a patient's overall health and a key target in facial aesthetics, yet its clinical assessment lacks standardized evaluation methods and remains subjective [1]. In aesthetic surgery and dermatology, assessment of skin features such as wrinkles, texture, and pigmentation are physician judged and can vary between evaluators [2]. Recent advances in artificial intelligence (AI) have demonstrated potential for objective image-based patient analysis, creating an opportunity to enhance quantitative assessment to support clinical decision making and patient satisfaction [2,3]. This study evaluates the diagnostic performance of multiple AI systems by comparing their patient assessments with physicians, exploring AI as a standardized tool to support physician facial analysis.

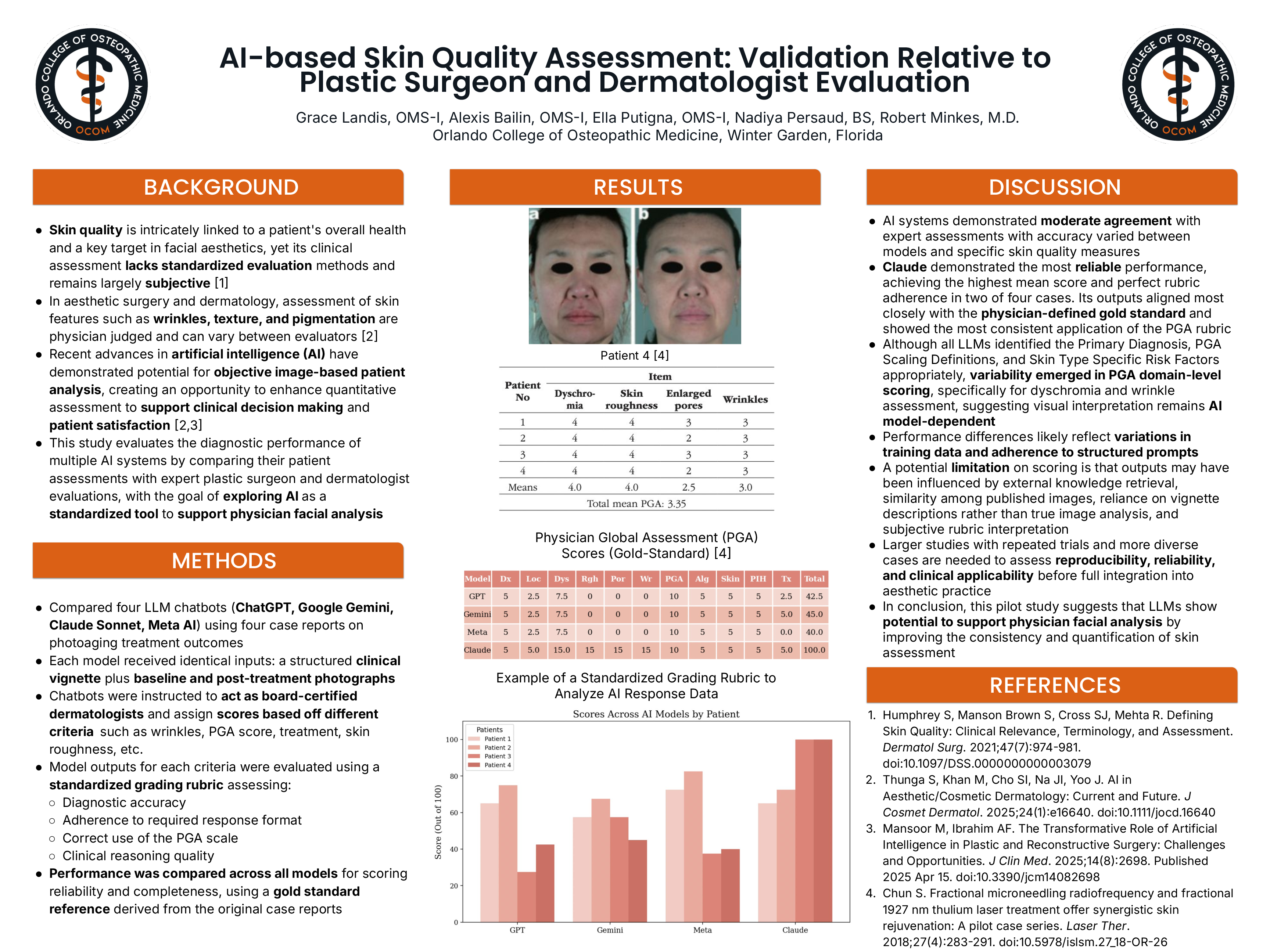

Methods: This study evaluated four large language model (LLM) chatbots (ChatGPT, Gemini, Claude, and Meta AI) using four case reports focused on treating photoaging. Each chatbot was provided with identical inputs consisting of a structured clinical vignette and paired baseline and post-treatment photographs. Models were instructed to act as board certified dermatologists and assign Physician Global Assessment (PGA) scores using a predefined 0-4 improvement scale [4]. Outputs were evaluated using a standardized grading rubric assessing diagnostic accuracy, adherence to a required response format, correct application of the PGA scale, and clinical reasoning. Model performance was compared to assess scoring reliability against a gold standard reference from the original case report.

Results: Overall mean performance scores were highest for Claude (84.38), followed by Meta AI (58.13), Gemini (56.88), and ChatGPT (52.50). Across all LLMs, Primary Diagnosis, PGA Scaling Definitions, and Skin Type Specific Risk Factors were identified accurately. Data showed strong agreement regarding Alignment Between Narrative Description and Assigned Scores. Variability was demonstrated in PGA Domain Scoring between different criteria.

Discussion: AI systems demonstrated moderate agreement with physician assessments, with Claude showing the closest alignment to the physician-defined gold standard. Although all LLMs accurately identified three criteria, as detailed in the results, PGA scores varied, particularly for dyschromia and wrinkle assessment, indicating that visual interpretation remains AI model dependent. Scoring may have been influenced by external knowledge retrieval, similarities to published images, reliance on clinical vignettes rather than true image analysis, and subjective rubric interpretation. Larger studies with repeated trials and more diverse cases are necessary to determine reproducibility, reliability, and clinical applicability before full integration into practice. Overall, this pilot study suggests that LLMs have potential to support physician facial analysis by enhancing the consistency and quantification of skin assessment.